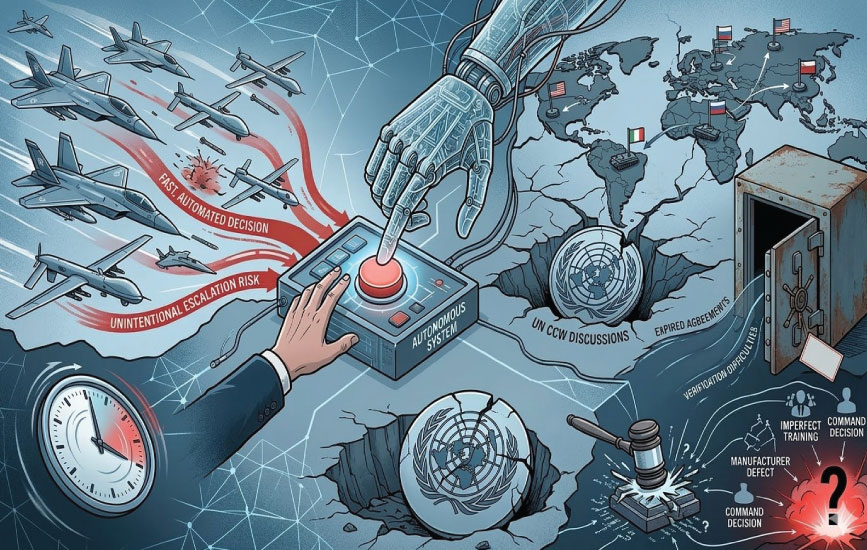

AI’s dual-use, software-based nature makes physical verification and accountability nearly impossible. Algorithmic warfare is dangerous because fast, automated decisions can trigger a war by accident before humans can intervene. While the UN advocates strict rules to keep humans in charge, powerful nations prefer to set their own policies.

By 2026, discussions on lethal autonomous weapons systems (LAWS) had evolved from a theoretical issue into active diplomacy. States negotiate under the United Nations Convention on Certain Conventional Weapons (CCW), while the United Nations Secretary-General has called weapons that operate without human control “politically unacceptable” and “morally repugnant.” This shows institutional attention, but no legally binding instrument yet regulates AI-enabled weapons. A familiar paradox emerges: global governance frameworks exist, but in practice they lack the capacity to restrain arms competition.

This is nothing new; nuclear governance provides a meaningful comparison. The Treaty on the Non-Proliferation of Nuclear Weapons (NPT) provides a durable framework with verification mechanisms and almost universal membership. New START capped the strategic arsenals of the US and Russia and gave both sides a verification mechanism. But its expiration in February 2026 without a successor agreement shows that the existence of an institution does not guarantee compliance. Moreover, major powers prioritize modernizing their weapons over limiting them, and no supranational authority can compel them to comply. Governance therefore depends on voluntary adherence rather than binding global enforcement.

The Governance Vacuum in Autonomous Decision-Making

Autonomous weapons expose this structural gap clearly. Unlike nuclear weapons, LAWS are not regulated by any dedicated regime. Expert debates within the CCW focus on “meaningful human control,” but states have not agreed on legally binding rules. Major powers rely on national policies or political declarations rather than treaty commitments, while a majority of other states support negotiating a binding instrument. Diplomacy continues, but regulation remains stalled.

Supporters argue that AI-controlled autonomous systems can reduce errors on the battlefield: machines do not act out of emotion or vengeance, and they process sensor data quickly, which can improve targeting precision. The argument builds on earlier support for automation in delivery systems and missile defense. On this view, accuracy and speed strengthen deterrence.

AI-Accelerated Conflicts and Escalation Risks

However, the risks these systems pose are unprecedented. The United Nations Institute for Disarmament Research (UNIDIR) and other research bodies have identified problems with autonomous systems, including the accountability, predictability, and explainability of their actions. Machine learning systems can behave unpredictably when exposed to manipulated or previously unseen data. Training cannot fully prepare these systems for real combat scenarios. They are also vulnerable to data manipulation, spoofing, and other technical faults. Under these conditions, the risk of unintentional escalation is high.

Strategic analysts raise similar concerns. Research at the Center for a New American Security (CNAS) and the Stockholm International Peace Research Institute (SIPRI) has warned that AI systems in command and control may shorten decision-making time during crises. If decisions are made at machine speed, misinterpretations and false signals are more likely to trigger escalation. Faster decisions do not mean greater stability.

Complex Regulation and Attribution of Responsibility

Another challenge is verification. As of now, no global organization has the tools to inspect AI source code, review the data used to train the models, or confirm compliance with “meaningful human control.” Nuclear arms control relies on counting warheads, tracking materials, and inspecting facilities. AI systems, by contrast, are software-based and often dual-use. The same algorithms are used in civilian and military settings, which makes monitoring and enforcement difficult.

With AI-based command and control, where human involvement is minimal, it is hard to say who is responsible if the system conducts an unlawful strike. When many actors are involved, it is hard to single out one; for example, it may be unclear whether the problem was a manufacturing defect or flawed training of the model. Such ambiguity makes it harder to hold states accountable. International organizations have frameworks that can hold states and individuals accountable for their actions, but opaque algorithms complicate attribution. This accountability deficit weakens enforcement even further. International institutions can call for regulatory mechanisms to address these problems, but gaining the consent of major powers is difficult.

The main obstacle to implementation lies not in the frameworks but in state responsibility. States’ national security policies drive their arms development. International institutions provide norms and forums, but they cannot substitute for political commitment. Arms control regimes become ineffective when major powers do not comply with their limits or fail to renew them. Emerging technologies are also advancing faster than the regulatory process. By the time actors agree on rules and definitions, the technology may have moved on, and the rules must be updated again.

Autonomous weapons exemplify this pattern. The technology develops faster than multilateral consensus can be reached. This reflects strategic competition in a multipolar world where states seek a technological advantage. International organizations alone cannot regulate this dynamic.

Global governance is important. UN proposals, discussions, and support help shape norms, but effective strategic restraint is possible only through state action. National policies must include measures to address technical faults, require human control over critical decisions, and ensure transparency and accountability to minimize miscalculation.